In the first part of this series, we examined the fundamental paradox: AI systems require perfectly structured data but cannot create the necessary data models themselves. Now let's get more concrete. Why does even the most powerful AI fail to understand what a "customer" or "product" means in a specific company? And why is precisely this definition work the key to success for every AI implementation?

Series: AI-Powered Data Modeling | Part 2 of 4

What Is a "Customer"? It Depends

The question sounds trivial. Every company knows who its customers are, right? In reality, however, it quickly becomes clear: the definition of "customer" is anything but unambiguous – and this is exactly where problems begin for AI-powered approaches.

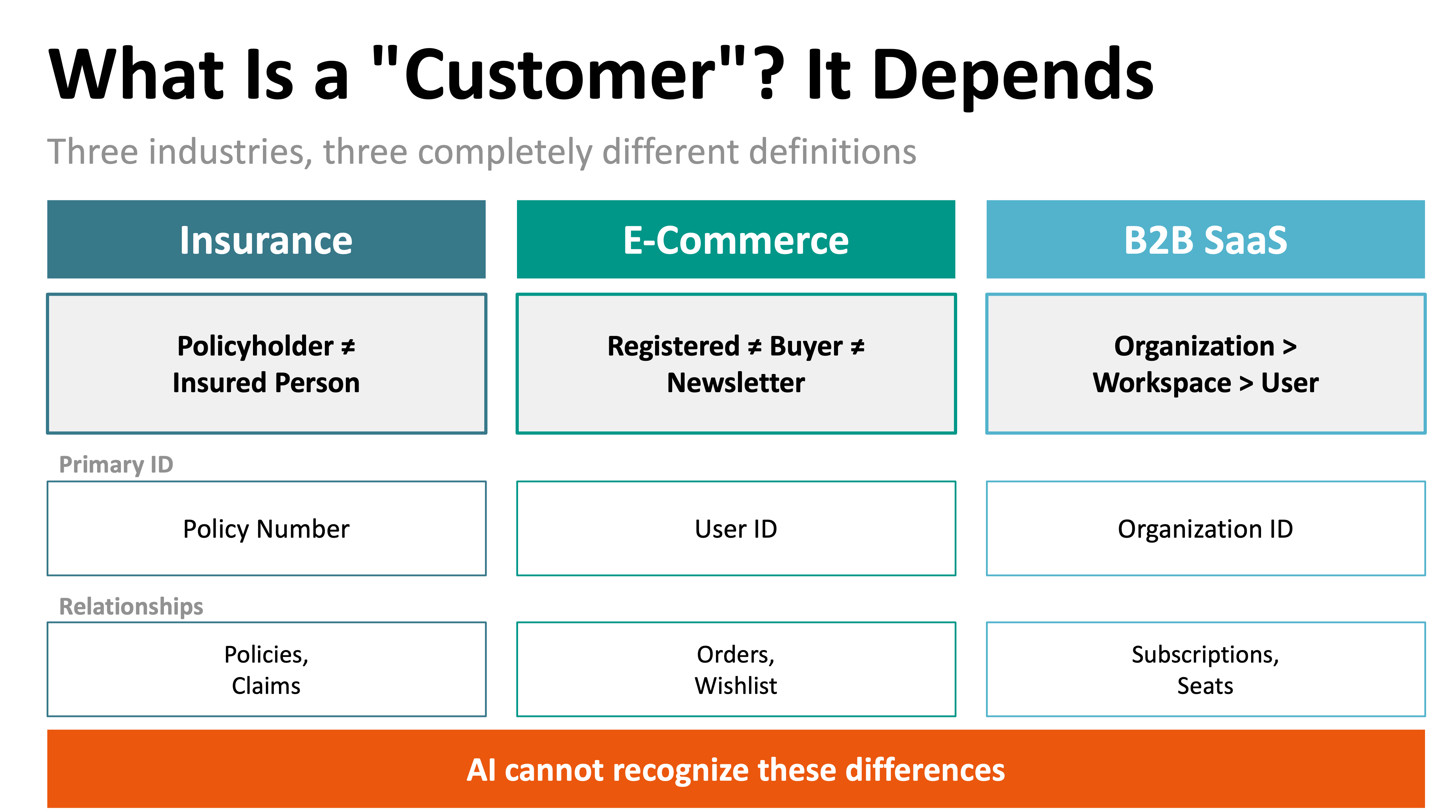

Let's look at three different industries:

Insurance Companies: Here, the distinction between policyholder and insured person is fundamental. The policyholder pays the premiums and is the contract partner. The insured person enjoys the insurance coverage – which may or may not be the same person. With life insurance for children or car insurance where multiple drivers are registered, these roles diverge. A generic AI model would probably suggest a single "Customer" object here – thereby ignoring the business-critical differentiation.
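To make the role split concrete, here is a minimal sketch of how policyholder and insured person could be kept apart in a model. All class and field names (`Person`, `Policy`, `holder_is_insured`) are illustrative assumptions, not taken from any real insurance schema.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Person:
    person_id: str
    name: str


@dataclass
class Policy:
    policy_id: str
    policyholder: Person  # contract partner who pays the premiums
    insured_persons: list[Person] = field(default_factory=list)  # people who enjoy the coverage

    def holder_is_insured(self) -> bool:
        """Return True if the policyholder is also one of the insured persons."""
        return self.policyholder in self.insured_persons


parent = Person("p-1", "Alex Example")
child = Person("p-2", "Sam Example")

# Life insurance for a child: the parent pays, the child is covered.
child_life = Policy("pol-100", policyholder=parent, insured_persons=[child])

# Car insurance with several registered drivers, including the policyholder.
car = Policy("pol-200", policyholder=parent, insured_persons=[parent, child])
```

A single "Customer" object would have no place to record that, in the first policy, the person paying and the person covered are different.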

E-Commerce Companies: In online retail, there are different degrees of customer relationships. A registered user has created an account but never bought anything. A buyer has completed at least one transaction. A newsletter subscriber has given consent to communication but may be neither registered nor a buyer. Which of these groups is "the customer"? The answer depends on context: for marketing, all three are relevant; for logistics, only actual buyers; for data privacy, special rules apply to newsletter subscribers.
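The context dependency described here can be sketched as a function that answers "is this a customer?" differently per department. The `Contact` fields and the `is_customer` rules are hypothetical and only mirror the marketing and logistics cases from the paragraph above; the data privacy rules are deliberately left out because they depend on the individual company.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Contact:
    email: str
    is_registered: bool = False       # has created an account
    order_count: int = 0              # number of completed transactions
    newsletter_consent: bool = False  # has agreed to receive communication


def is_customer(contact: Contact, context: str) -> bool:
    """Decide whether a contact counts as a customer in a given context (illustrative rules)."""
    if context == "marketing":
        # Marketing cares about all three groups.
        return contact.is_registered or contact.order_count > 0 or contact.newsletter_consent
    if context == "logistics":
        # Logistics only cares about actual buyers.
        return contact.order_count > 0
    raise ValueError(f"no customer definition for context {context!r}")


subscriber = Contact("reader@example.com", newsletter_consent=True)
buyer = Contact("buyer@example.com", is_registered=True, order_count=2)
```

The same newsletter subscriber is a customer for marketing but not for logistics, which is exactly the kind of rule a generic model cannot guess.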

B2B SaaS Companies: Here it gets even more complex. The paying entity is the organization. Within this organization, there are various workspaces or teams. In each workspace, individual users work. The billing contact is again a different person than the technical administrator or the daily user. Who is "the customer" here? The organization, because it pays? The user, because they use the software? The administrator, because they make decisions? All have different attributes, permissions, and relationships to each other.
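A rough sketch of this hierarchy might look as follows. The names (`Organization`, `Workspace`, `User`, `Role`) and the decision to store roles per workspace are assumptions for illustration; a real SaaS product may well draw these lines differently.

```python
from dataclasses import dataclass, field
from enum import Enum


class Role(Enum):
    ADMIN = "admin"    # technical administrator
    MEMBER = "member"  # daily user


@dataclass(frozen=True)
class User:
    user_id: str
    email: str


@dataclass
class Workspace:
    name: str
    members: dict[str, Role] = field(default_factory=dict)  # user_id -> role in this workspace


@dataclass
class Organization:
    name: str              # the paying entity
    billing_contact: User  # may never log in to any workspace
    workspaces: list[Workspace] = field(default_factory=list)

    def users_with_role(self, role: Role) -> set[str]:
        """Collect the ids of all users holding the given role in any workspace."""
        return {
            user_id
            for workspace in self.workspaces
            for user_id, assigned in workspace.members.items()
            if assigned is role
        }


billing = User("u-1", "billing@example.com")
admin = User("u-2", "admin@example.com")
daily = User("u-3", "daily@example.com")

org = Organization(
    name="Example GmbH",
    billing_contact=billing,
    workspaces=[
        Workspace("Analytics", members={admin.user_id: Role.ADMIN, daily.user_id: Role.MEMBER}),
    ],
)
```

Even in this small sketch, "the customer" could mean the organization, the billing contact, the administrator, or the daily user, each with different attributes and relationships.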

These examples show: "Customer" is not a universal term but a highly context-dependent construct. And it is precisely this context dependency that AI cannot capture.

The Illusion of the Generic Model

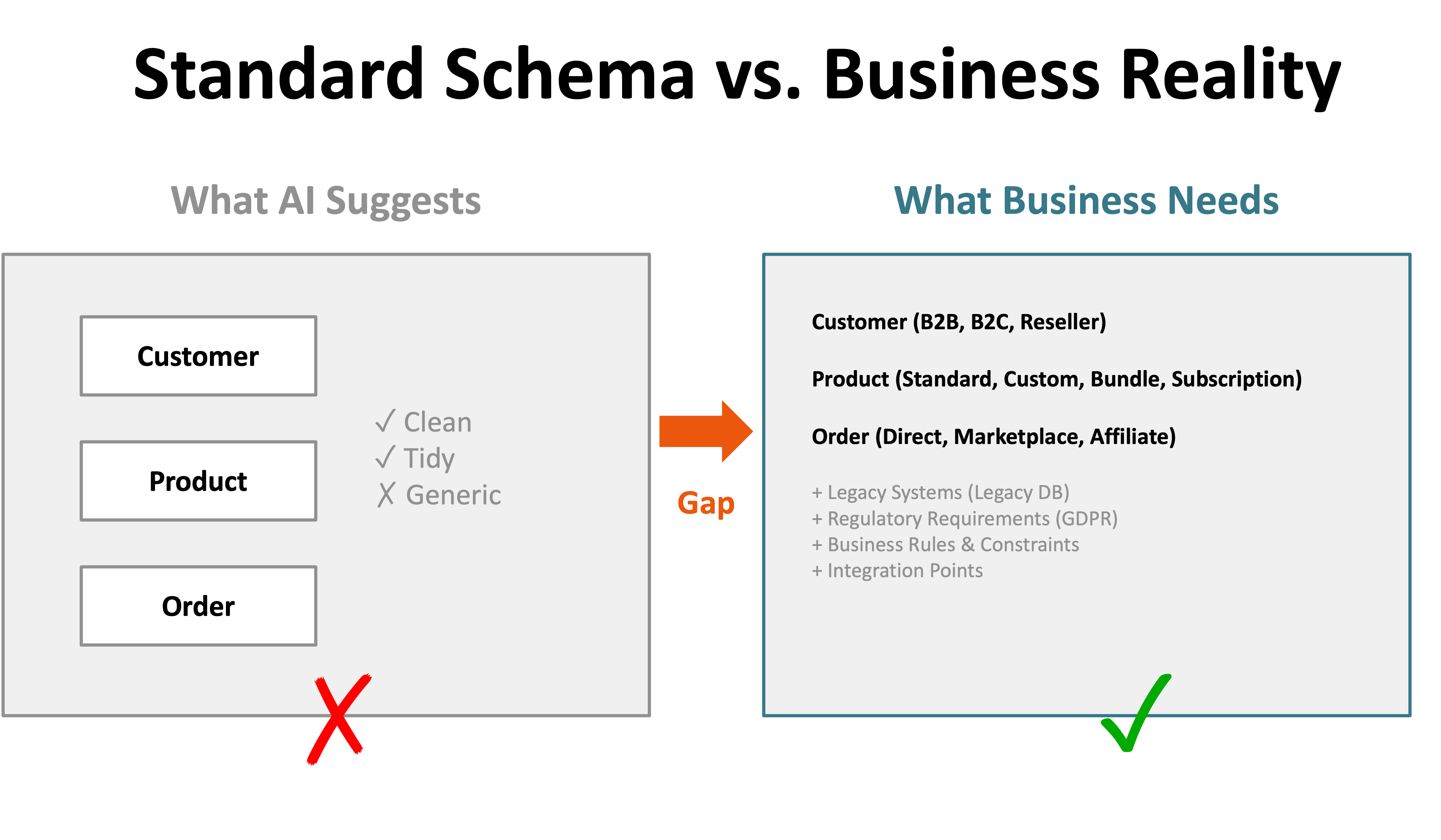

Many AI tools and standard schemas promise to solve exactly this problem. They offer predefined data models for various industries: Retail, Healthcare, Financial Services, Manufacturing. The idea is tempting: you select your industry template, adjust a few parameters, and the data model is ready.

In practice, this rarely works. Why not?

Standard schemas represent the average, not the specific. A generic retail schema might know "Customer," "Product," and "Order." But it knows nothing about the particularities of a company that serves both B2C and B2B. It doesn't consider that some customers come through marketplaces where different data structures apply. It doesn't understand that certain products must be treated differently from a regulatory perspective than others.

Differentiation lies in the details. What distinguishes a company from its competitors is often not the basic structure but the specific design. An online retailer for groceries has different requirements for product master data (expiration date, cold chain, allergens) than an electronics retailer (warranty, compatibility, technical specifications). A generic schema cannot capture these nuances.
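The difference in product master data can be sketched with two specializations of a shared base. The class and attribute names below are hypothetical and simply follow the examples in the paragraph above.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import Optional


@dataclass
class Product:
    sku: str
    name: str


@dataclass
class GroceryProduct(Product):
    expiration_date: Optional[date] = None
    requires_cold_chain: bool = False
    allergens: list[str] = field(default_factory=list)


@dataclass
class ElectronicsProduct(Product):
    warranty_months: int = 0
    compatible_with: list[str] = field(default_factory=list)
    technical_specs: dict[str, str] = field(default_factory=dict)


yogurt = GroceryProduct(
    sku="g-1",
    name="Yogurt",
    expiration_date=date(2030, 1, 1),
    requires_cold_chain=True,
    allergens=["milk"],
)
charger = ElectronicsProduct(
    sku="e-1",
    name="USB-C charger",
    warranty_months=24,
    technical_specs={"power": "65 W"},
)
```

A generic "Product" with only `sku` and `name` fits both retailers, but it carries none of the attributes that actually drive their processes.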

Systems that have grown over time have their own logic. Many companies work with data structures that have evolved over years or decades. Mergers, acquisitions, system migrations – all of this leaves traces in the data. A "clean" AI-generated model would ignore this history and would therefore be practically unusable, because it doesn't fit the existing IT landscape.

About This Series: This is Part 2 of 4 in our series on AI-powered data modeling. Part 1 examines the fundamental paradox. Part 3 presents a hybrid approach that combines AI speed with human expertise.

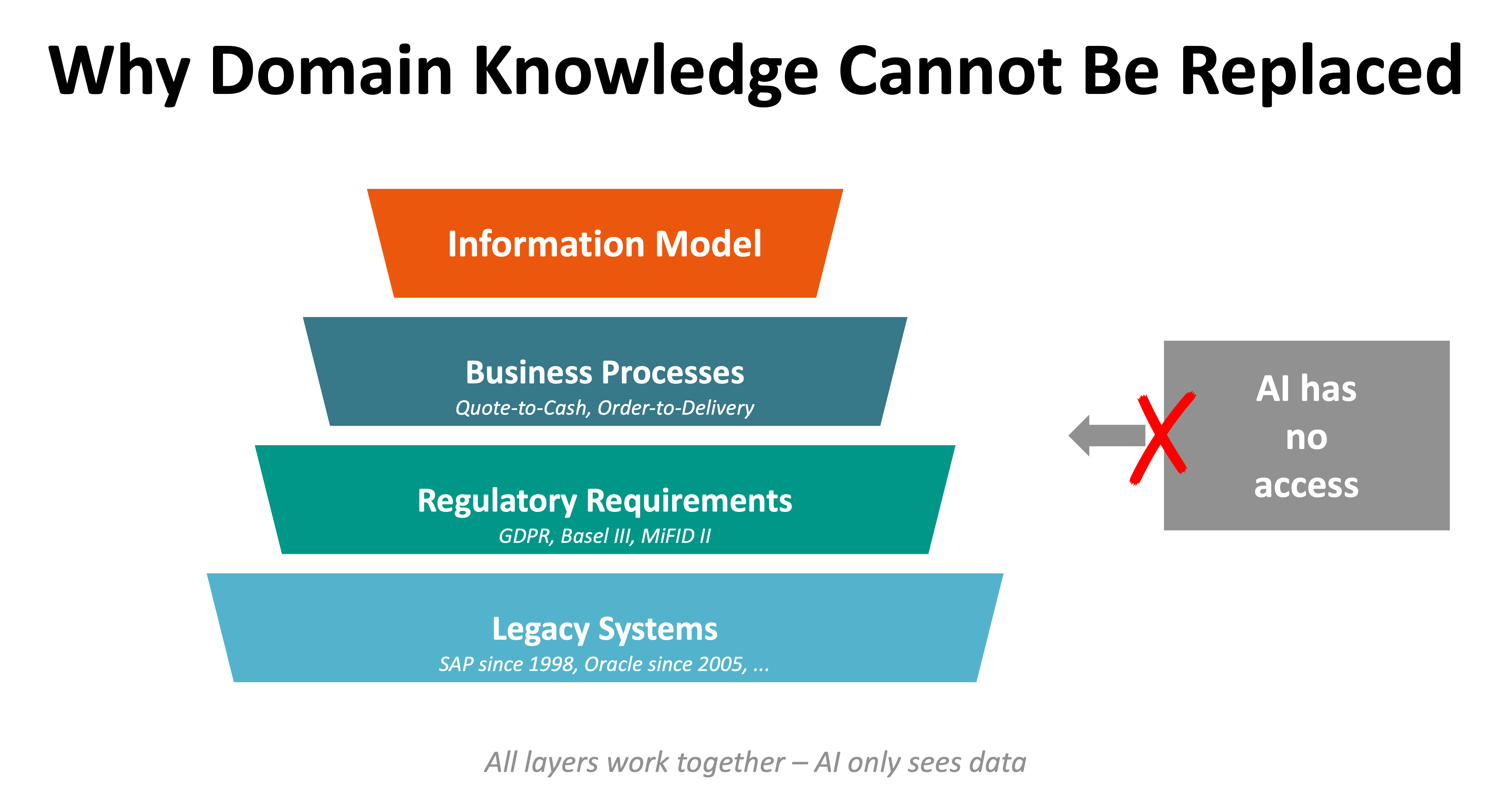

Why Domain Knowledge Cannot Be Replaced

The limits of AI automation become particularly clear when we look at everything that goes into defining a business object:

Business processes determine data structures. Take the example of "Order" in manufacturing. For a company that produces to stock, an order is a relatively simple construct: customer orders product X in quantity Y for delivery on date Z. For a contract manufacturer, however, every order is unique, with specific requirements, approval processes, change histories, and quality checks. The data structure must reflect this process reality – and only those who understand the process know it.

Regulatory requirements are highly specific. A pharmaceutical company is legally required to ensure complete batch traceability for its products. A financial services provider must retain certain customer data for years for compliance purposes while deleting other data in accordance with GDPR. A medical device manufacturer is subject to strict documentation requirements. These regulatory frameworks flow directly into data modeling – and they may not be part of any AI's training data.

Legacy systems demand integration, not revolution. Very few companies are able or willing to replace their entire IT landscape at once. New data models must be able to communicate with existing systems. This means you must understand which data formats the legacy systems use, which integration points exist, and which transformations are necessary. An AI that suggests an "optimal" model without regard to the existing infrastructure doesn't help.

The Fundamental Difference: Syntax vs. Semantics

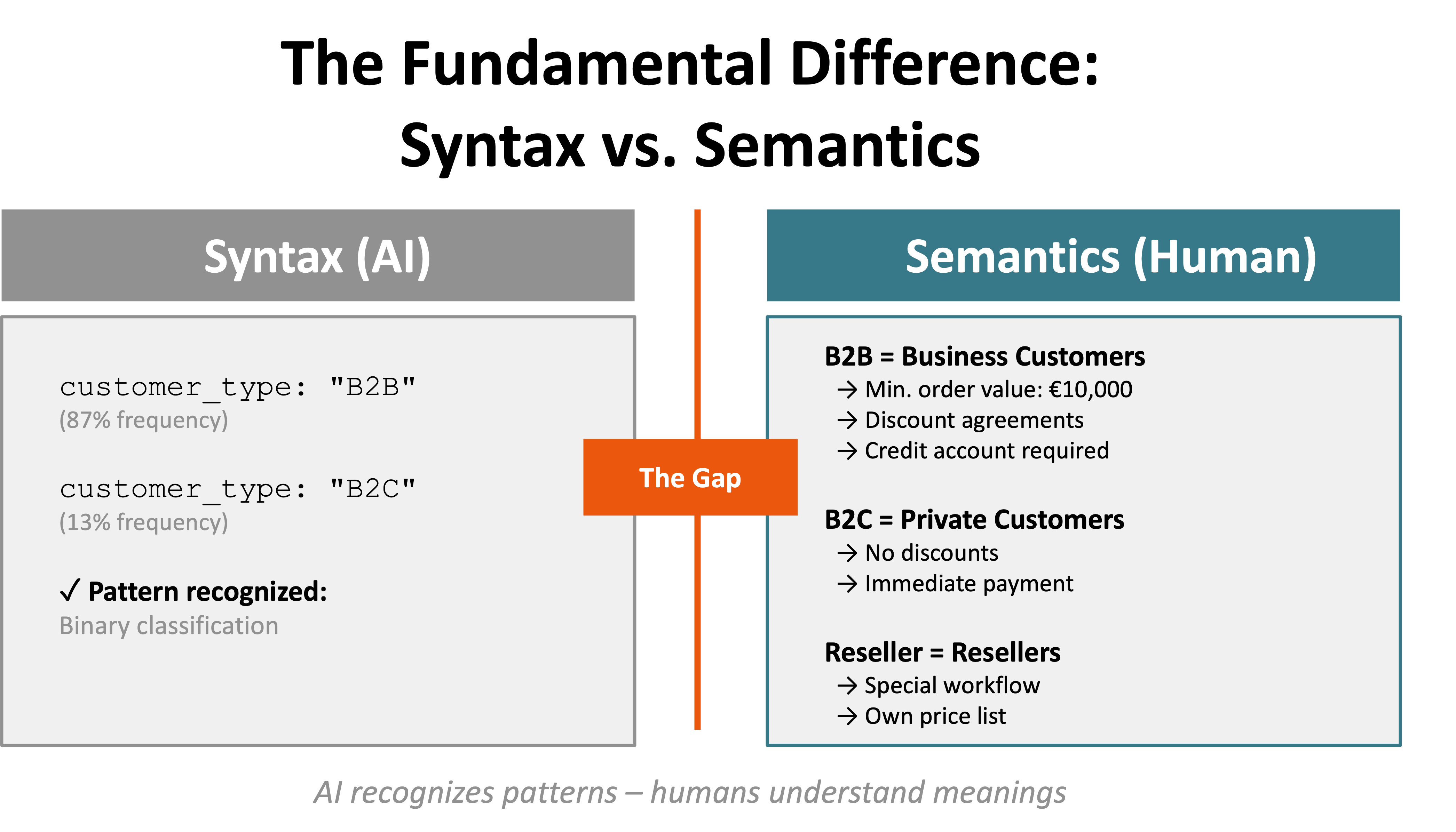

Here we get to the core of the problem. AI systems – including Large Language Models – work at the level of patterns and probabilities. They recognize that certain terms frequently occur together, that certain structures are typical for certain contexts. This is syntax: the formal structure of data.

What AI cannot do: understand semantics. The meaning of a term in a specific business context cannot be derived from frequency distributions. If "customer_type" in a database usually has the values "B2B" or "B2C," an AI can recognize the pattern. But it doesn't know that "B2B" in this company means customers with a minimum order value of €10,000, while "B2C" only includes direct customers without discount agreements – and that there's additionally the category "Reseller," which is treated differently than both.
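Written down explicitly, the company-specific meaning behind `customer_type` might look like the sketch below. The threshold comes from the example above; the field names, the order in which the rules are checked, and the `"UNCLASSIFIED"` fallback are assumptions that a domain expert would have to confirm.

```python
from dataclasses import dataclass
from decimal import Decimal

B2B_MIN_ORDER_VALUE = Decimal("10000")  # EUR, taken from the example in the text


@dataclass(frozen=True)
class CustomerFacts:
    min_order_value: Decimal
    is_direct_customer: bool
    has_discount_agreement: bool
    is_reseller: bool = False


def classify(facts: CustomerFacts) -> str:
    """Map business facts to a customer_type value (rule precedence is an assumption)."""
    if facts.is_reseller:
        return "Reseller"
    if facts.min_order_value >= B2B_MIN_ORDER_VALUE:
        return "B2B"
    if facts.is_direct_customer and not facts.has_discount_agreement:
        return "B2C"
    return "UNCLASSIFIED"  # cases the stated rules do not cover


wholesaler = CustomerFacts(Decimal("25000"), is_direct_customer=False, has_discount_agreement=True)
shopper = CustomerFacts(Decimal("0"), is_direct_customer=True, has_discount_agreement=False)
partner = CustomerFacts(Decimal("50000"), is_direct_customer=False, has_discount_agreement=True, is_reseller=True)
```

None of these rules can be read off the column values "B2B" and "B2C" themselves; they only exist once someone who knows the business has stated them.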

A human data modeler brings this contextual understanding. They ask: "What does B2B mean in your process? What attributes are relevant for this distinction? How does this affect downstream systems?" AI cannot ask these questions – at least not in a form that leads to usable answers.

What This Means in Practice

Does this mean that AI is completely useless for data modeling? Not at all. But it means we must deploy AI where its strengths lie – and not where fundamental business understanding is required.

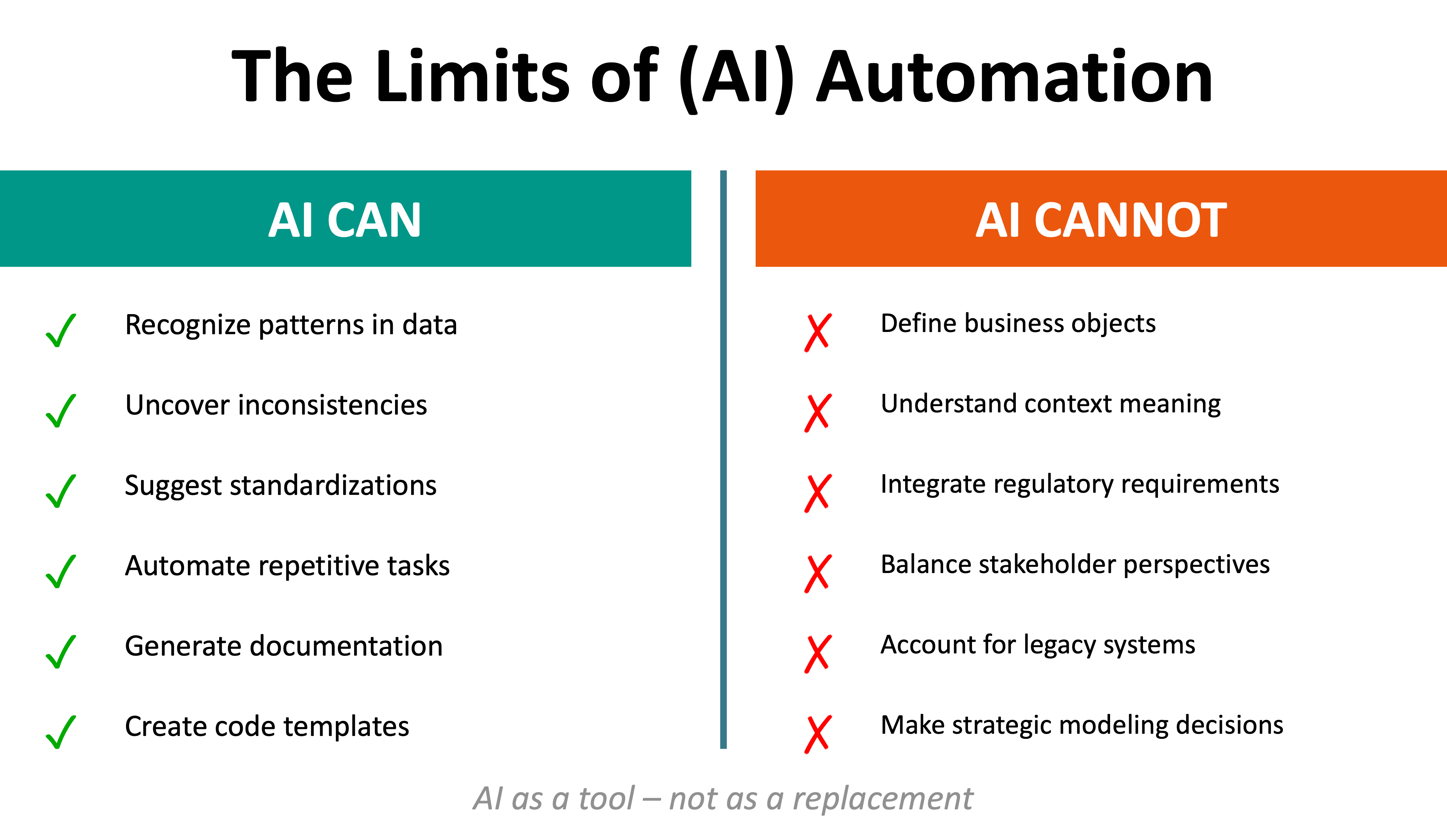

AI is very good at recognizing patterns in existing data structures, uncovering inconsistencies, and suggesting standardizations. It can automate repetitive tasks, generate documentation, and create code templates. All of this significantly accelerates the work of data modelers.
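As a deliberately simple, rule-based stand-in for this kind of automated consistency check, the following sketch groups values that differ only in case, whitespace, or separators. The function name and the sample values are made up for illustration; an AI-assisted tool would go further, but the division of labor is the same: the tool finds candidates, a human decides what they mean.

```python
from collections import defaultdict


def find_inconsistent_spellings(values):
    """Group raw values that differ only in case, whitespace, or hyphen/underscore use."""
    groups = defaultdict(set)
    for raw in values:
        key = raw.strip().lower().replace("-", "").replace("_", "").replace(" ", "")
        groups[key].add(raw)
    # Only report keys that appear in more than one spelling.
    return {key: sorted(variants) for key, variants in groups.items() if len(variants) > 1}


raw_customer_types = ["B2B", "b2b", "B2C", "B-2-C", " B2B ", "Reseller"]
report = find_inconsistent_spellings(raw_customer_types)
```

Here `report` flags the spellings of "B2B" and "B2C" as candidates for standardization. Whether they really denote the same category remains a business decision.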

What AI cannot do: make the strategic decisions that constitute a data model. Which business objects are central? How are they defined? What relationships exist between them? Which attributes are business-critical? These questions require domain knowledge, process understanding, and the ability to integrate different stakeholder perspectives.

In the next article in this series, we'll show a concrete hybrid approach: How can data modelers use AI to accelerate their work – without giving up control over the crucial definitions? This involves the practical use of AI in requirements analysis, templates and validation, and the question of which tasks can be automated and which require human expertise.