In the past, I've always had a need for large data sets to test data logistics processes or a database technology for Data Vault.

Not only at customers' sites, though, but also to prepare and write a blog post, perform a demo or a webinar.

As a result of my collaboration with EXASOL, I focused on the TPC-H tool dbgen to generate gigabytes of data and to test the read and write performance with Data Vault on EXASOL.

To share my experience with the TPC-H benchmark and dbgen in generating large datasets, I wrote this blog post as a step-by-step guide.

Preparation

The TPC-H benchmark tool dbgen is required to generate large data sets. This is provided on the TPC-Website. For this purpose, the source code for the TPC-H benchmark must be downloaded via the menu Downloads -> Download Programs and Specifications (currently this is version 3.0.1 - a link to the download will be sent by e-mail).

On Windows, it is possible to download the Visual Studio Community Edition to compile the source code and create the dbgen executable.

On MacOS, the provided compiler clang/clang++ can be used.

The downloaded zip file needs to be unpacked. For example, I have unpacked all files into the folder ./TPC-H V3.0.1/.

Compilation on Windows

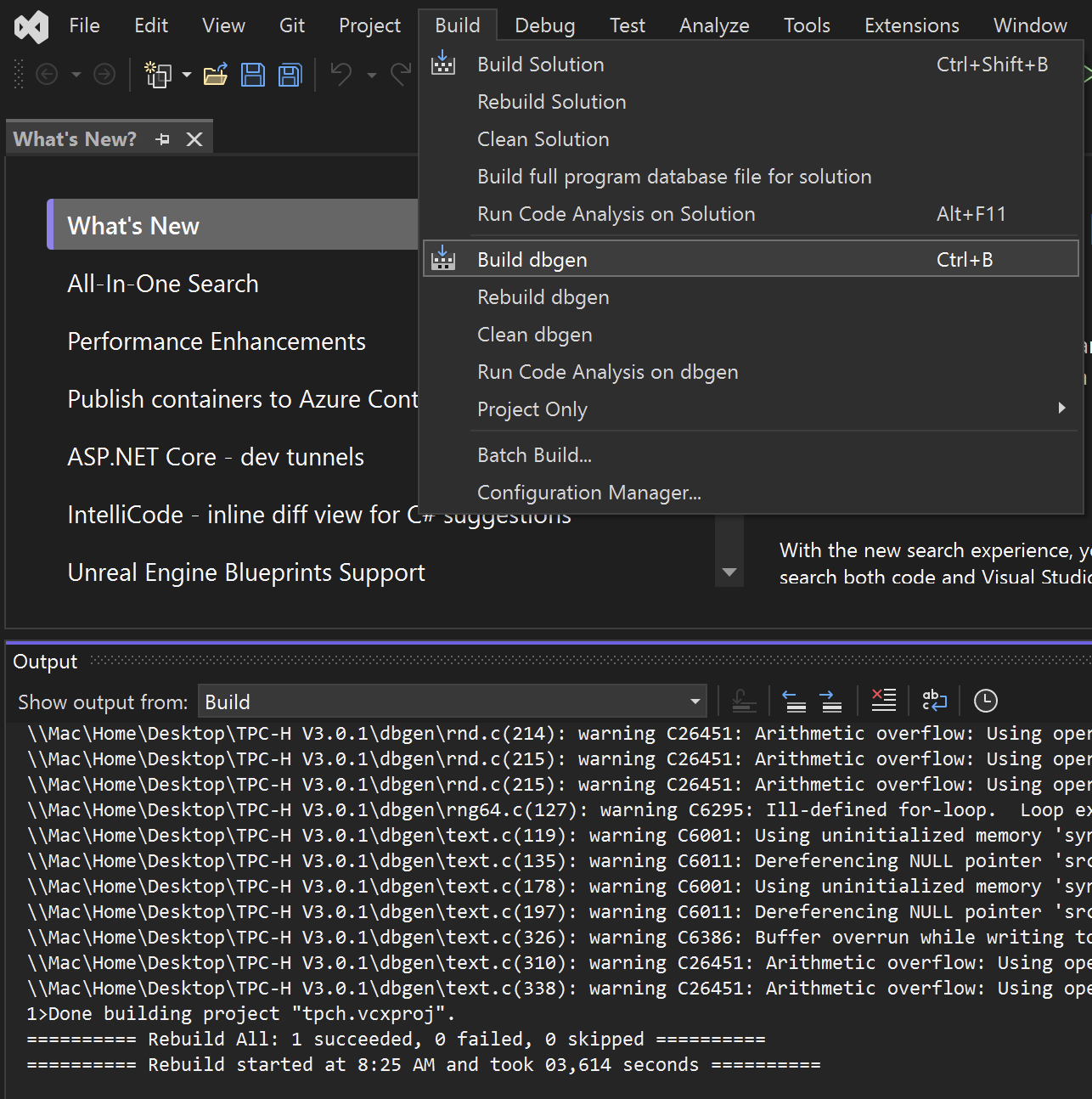

Open the project file tpch.sln in the directory /TPC-H V3.0.1/dbgen/ on Windows with Visual Studio.

With Build dbgen (Ctrl-B), see the following picture, dbgen can be compiled.

There will be some warnings, but so far everything seems to be ok. dbgen has been created and is now located in the ./TPC-H V3.0.1/dbgen/Debug/ directory.

The dbgen.exe file should be copied or moved up one level from folder ./TPC-H V3.0.1/dbgen/Debug/ to folder ./TPC-H V3.0.1/dbgen/ to prevent errors when running dbgen.

Compilation on MacOS

To compile dbgen on MacOS, the file makefile.suite must be modified. This is located in the directory /TPC-H V3.0.1/dbgen/.

For the compiler clang and the database MS SQLServer I have modified the file as follows:

################

## CHANGE NAME OF ANSI COMPILER HERE

################

CC = clang

# Current values for DATABASE are: INFORMIX, DB2, TDAT (Teradata)

# SQLSERVER, SYBASE, ORACLE, VECTORWISE

# Current values for MACHINE are: ATT, DOS, HP, IBM, ICL, MVS,

# SGI, SUN, U2200, VMS, LINUX, WIN32

# Current values for WORKLOAD are: TPCH

DATABASE= SQLSERVER

MACHINE = LINUX

WORKLOAD = TPCH

To eliminate the error message 'malloc.h' file not found, two files need to be modified:

- bm_utils.c

- varsub.c

In both files #include <malloc.h> must be changed to #include <sys/malloc.h> to make the error message disappear.

dbgen is compiled with make -f makefile.suite. There are a few warnings, but so far everything seems fine. The program dbgen has been built and is now located in the directory ./TPC-H V3.0.1/dbgen/.

Test of dbgen

For testing, dbgen can be run with the -h option in the terminal or in PowerShell within the directory ./TPC-H V3.0.1/dbgen/ and the dbgen help will be displayed:

% ./dbgen -h

TPC-H Population Generator (Version 3.0.0 build 0) Copyright Transaction Processing Performance Council 1994 - 2010 USAGE: dbgen [-{vf}][-T {pcsoPSOL}] [-s <scale>][-C <procs>][-S <step>] dbgen [-v] [-O m] [-s <scale>] [-U <updates>] Basic Options =========================== -C <n> -- separate data set into <n> chunks (requires -S, default: 1) -f -- force. Overwrite existing files -h -- display this message -q -- enable QUIET mode -s <n> -- set Scale Factor (SF) to <n> (default: 1) -S <n> -- build the <n>th step of the data/update set (used with -C or -U) -U <n> -- generate <n> update sets -v -- enable VERBOSE mode Advanced Options =========================== -b <s> -- load distributions for <s> (default: dists.dss) -d <n> -- split deletes between <n> files (requires -U) -i <n> -- split inserts between <n> files (requires -U) -T c -- generate cutomers ONLY -T l -- generate nation/region ONLY -T L -- generate lineitem ONLY -T n -- generate nation ONLY -T o -- generate orders/lineitem ONLY -T O -- generate orders ONLY -T p -- generate parts/partsupp ONLY -T P -- generate parts ONLY -T r -- generate region ONLY -T s -- generate suppliers ONLY -T S -- generate partsupp ONLY To generate the SF=1 (1GB), validation database population, use: dbgen -vf -s 1 To generate updates for a SF=1 (1GB), use: dbgen -v -U 1 -s 1

Generation of sample data with dbgen

When dbgen is run without any other options, a GB of data is generated, which is distributed to eight different files ending with *.tbl (tables):

- Customers

- Land

- Lineitem

- Orders

- Part

- Partsupp

- Region

- Supplier

# Dateien für 1GB Daten

24346144 25 Apr 18:10 customer.tbl

759863287 25 Apr 18:10 lineitem.tbl

2224 25 Apr 18:10 nation.tbl

171952161 25 Apr 18:10 orders.tbl

24135125 25 Apr 18:10 part.tbl

118984616 25 Apr 18:10 partsupp.tbl

389 25 Apr 18:10 region.tbl

1409184 25 Apr 18:10 supplier.tbl

In order to obtain information during data generation, it is recommended to activate the verbose mode. This is especially true if large sample data sets with several 100 GB are to be created. This can take some time.

If more data than the default 1 GB needs to be generated, use the -s <n> (scaling factor (SF)) option. By default, <n> is set to 1 and generates 1 GB of data. Therefore, a scale factor -s 10 would generate 10 GB and a scale factor -s 100 would generate 100 GB of data.

To generate 500 GB of data, the following command could be used to run dbgen:

% ./dbgen -vf -s 500

TPC-H Population Generator (Version 3.0.0)

Copyright Transaction Processing Performance Council 1994 - 2010

Generating data for suppliers table/

Preloading text ... 100%

done.

Generating data for customers tabledone.

Generating data for orders/lineitem tablesdone.

Generating data for part/partsupplier tablesdone.

Generating data for nation tabledone.

Generating data for region tabledone.

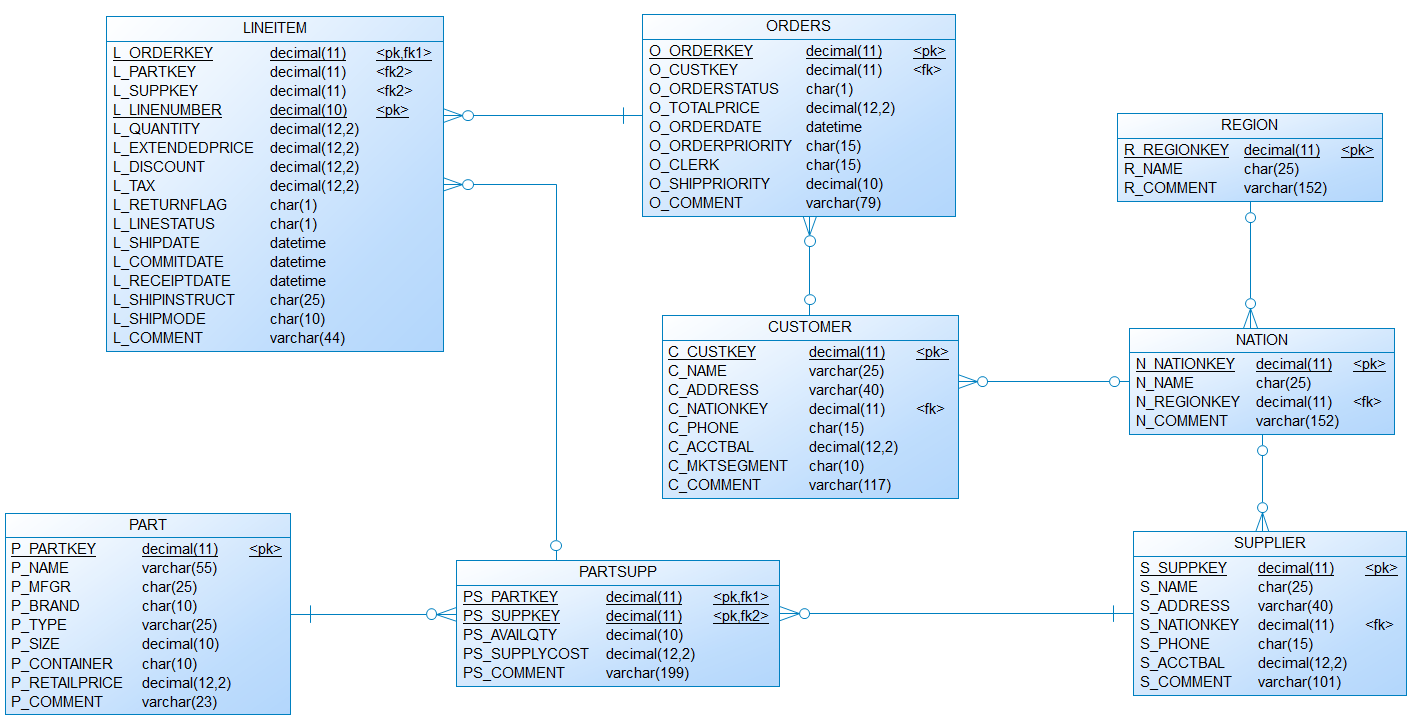

Data model for the TPC-H sample data

The data definition file (dss.ddl) and the referential integrity file (dss.ri) to create the tables for the TPC-H are located in the directory ./TPC-H V3.0.1/dbgen/.

To make the work easier, I have created a data model (see picture below) and DDLs for different databases, which are welcome to be downloaded and used:

Loading the TPC-H sample data

Now just load the data into the data model and run tests or experiments using this data. Have fun with it!

Also for this, to make the work easier, I have created scripts that are welcome to download and use:

And that's it folks. There are some upcoming blogposts on Data Vault with EXASOL and other interesting performance topics. Stay tuned.

Please leave comments if you need more information or examples of other databases.

So long,

Dirk