DWH

-

18th TDWI Roundtable Frankfurt

At the end of April, as Head of Competence – Agile Data Warehousing at BLUEFORTE, I gave a talk on Data Vault at the 18th TDWI Roundtable in Frankfurt/Main. The atmosphere was great, the TDWI organized the event very well, and the audience joined the discussions with passion.

-

Advertorial - The Modern Answer

Advertorial - An Online Themed Special on Data Vault

Business requirements, or business needs as they are often called today, change at very short intervals in today's companies. What was considered settled yesterday may already be obsolete tomorrow. Business departments demand suitable data at ever shorter intervals in order to make decisions. Fixed and rigid rules are deliberately broken in order to discover something new.

-

Data Model Scorecard

Objective review and data quality goals of data models

Did you ever ask yourself which score your data model would achieve? Could you imagine 90%, 95% or even 100% across 10 categories of objective criteria?

No?

Yes?

Either way, whether you answered “no” or “yes”, I recommend using something to test the quality of your data model(s). For years there have been methods to test and ensure quality in software development, like ISTQB, IEEE, RUP, ITIL, COBIT and many more. In data warehouse projects I observed test methods testing everything: loading processes (ETL), data quality, organizational processes, security, …

But data models? Never! But why?

-

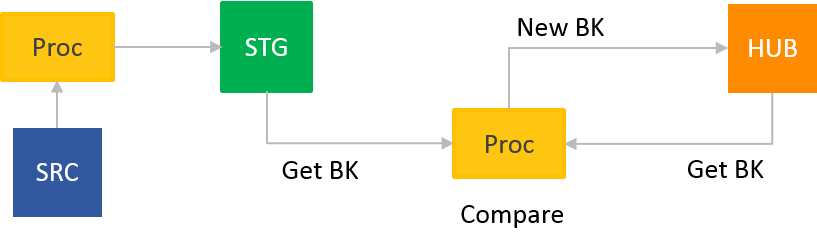

Data Vault KISS - Keep it Small and Simple

KISS –

K – Keep (Data Vault)

I – It (ETL in your Data Warehouse)

S – Small (lightweight processes aka short and easy SQL)

S – and simple (easy Inserts and Updates)

-

Data Vault on EXASOL - Modeling and Implementation

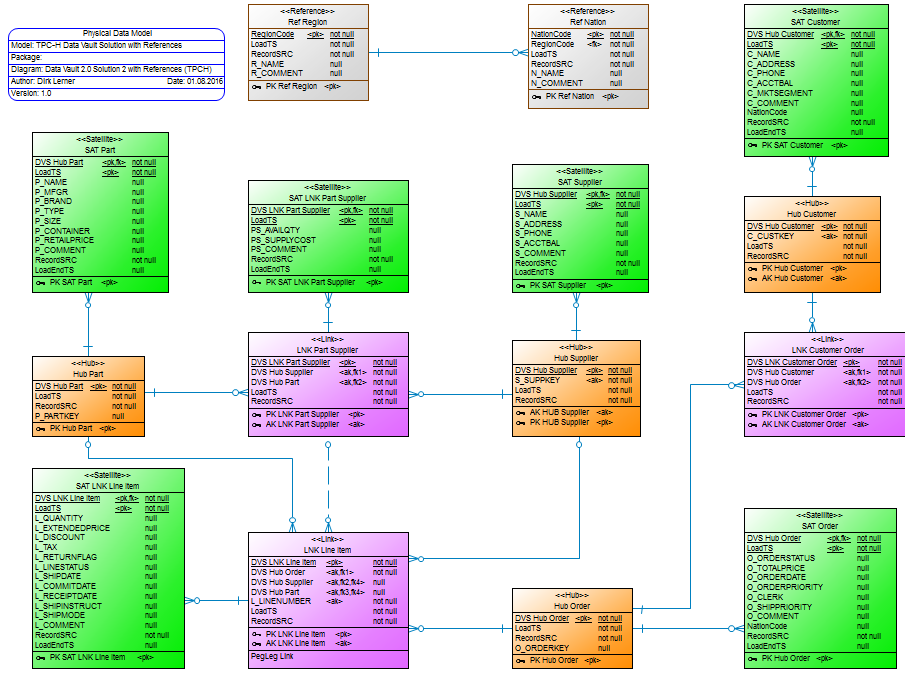

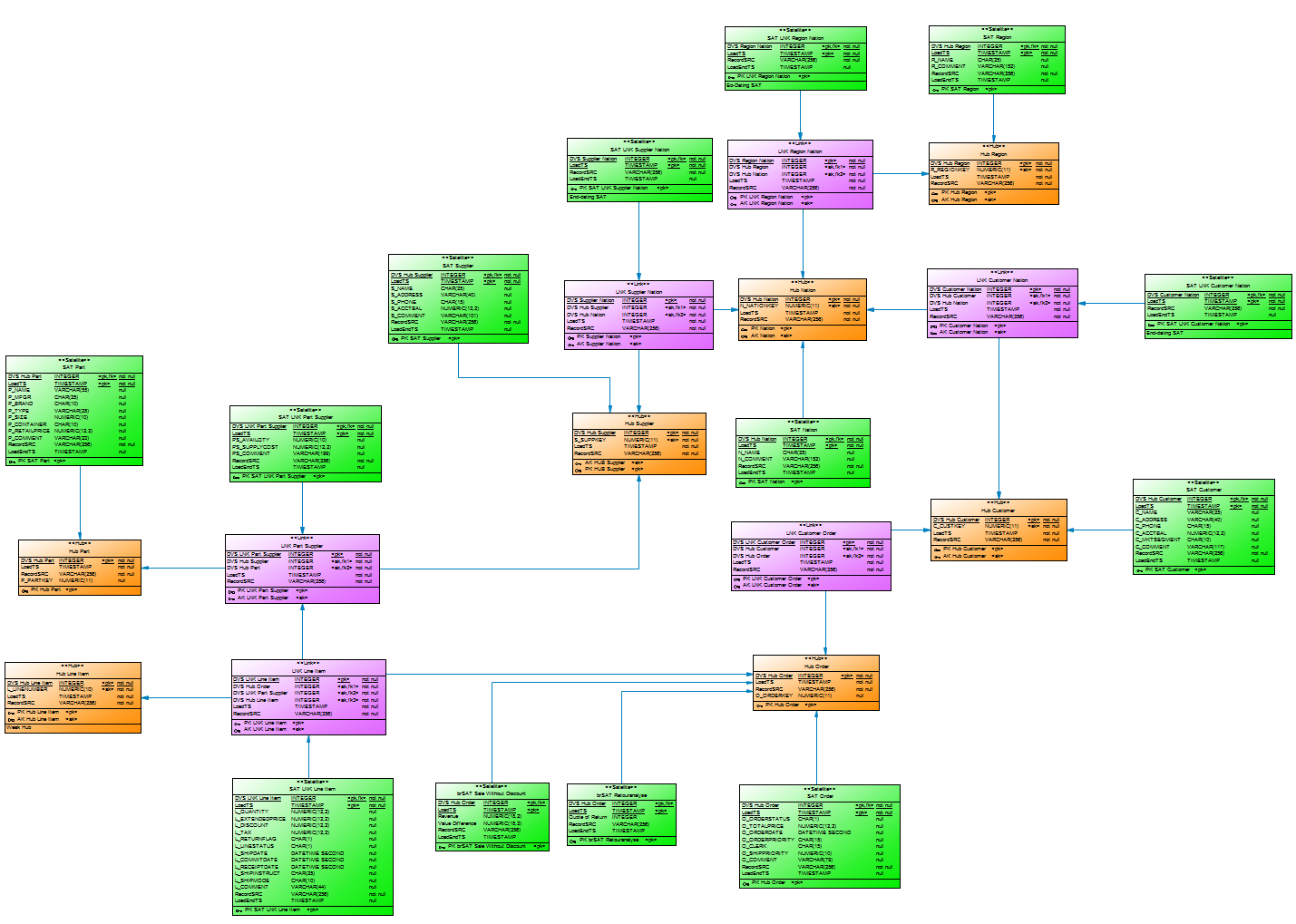

On July 15, Mathias Brink and I ran a webinar about Data Vault on EXASOL, modeling and implementation. The webinar started with an overview of the concepts of Data Vault Modeling and how Data Vault Modeling enables agile development cycles. Afterwards, we showed a demo that transformed the TPC-H data model into a Data Vault data model and demonstrated how to query the data from it. The results were then compared with the original TPC-H queries.

-

e-Business Intelligence: Leveraging DB2 for Linux on S/390

In 2001 I had the great opportunity to co-author an IBM Redbook. What a great thing! I did not think twice and agreed immediately.

The topic was installing DB2 Universal Database on an S/390 running SuSE Linux and running performance tests on it.

-

High performance - Data Vault and Exasol

You may have received an e-mail invitation from EXASOL or from ITGAIN inviting you to our forthcoming webinar, such as this:

Do you have difficulty incorporating different data sources into your current database? Would you like an agile development environment? Or perhaps you are using Data Vault for data modeling and are facing performance issues?

If so, then attend our free webinar entitled “Data Vault Modeling with EXASOL: High performance and agile data warehousing.” The 60-minute webinar takes place on July 15 from 10:00 to 11:00 am CEST.

-

High-performance Data Vault

Over the last few weeks, Mathias Brink and I have worked hard on the topic of Data Vault on EXASOL.

Our (simple) question: How does EXASOL perform with Data Vault?

First, we had to decide what kind of data to run performance tests against in order to get a feeling for the power of this combination. And we decided to use the well-known TPC-H benchmark created by the non-profit organisation TPC.

Second, we built a (simple) Data Vault model and loaded 500 GB of data into the installed model. And to be honest, it was not the best model. On top of it we built a virtual TPC-H data model to execute the TPC-H SQLs in order to analyse performance.
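To make the idea of a “virtual” model on top of a Data Vault more concrete, here is a minimal sketch. It is an illustration under assumptions, not the model used in the tests: the hub and satellite tables (`hub_region`, `sat_region`), the hash key values and the load timestamps are invented, only the TPC-H `REGION` columns (`R_REGIONKEY`, `R_NAME`, `R_COMMENT`) come from the benchmark, and Python's built-in `sqlite3` module stands in for EXASOL.

```python
import sqlite3

# Hypothetical Data Vault tables plus a view that exposes the
# original TPC-H REGION shape on top of them.
DDL = """
CREATE TABLE hub_region (
    region_hk   TEXT PRIMARY KEY,
    r_regionkey INTEGER NOT NULL
);
CREATE TABLE sat_region (
    region_hk TEXT NOT NULL,
    load_ts   TEXT NOT NULL,
    r_name    TEXT,
    r_comment TEXT,
    PRIMARY KEY (region_hk, load_ts)
);
CREATE VIEW region AS
SELECT h.r_regionkey, s.r_name, s.r_comment
FROM hub_region AS h
JOIN sat_region AS s ON s.region_hk = h.region_hk
WHERE s.load_ts = (
    SELECT MAX(s2.load_ts)
    FROM sat_region AS s2
    WHERE s2.region_hk = s.region_hk
);
"""


def build_demo():
    """Create the tables and the view, then load two satellite versions."""
    conn = sqlite3.connect(":memory:")
    conn.executescript(DDL)
    conn.execute("INSERT INTO hub_region VALUES ('hk1', 0)")
    conn.executemany(
        "INSERT INTO sat_region VALUES (?, ?, ?, ?)",
        [
            ("hk1", "2015-01-01", "AFRICA", "old comment"),
            ("hk1", "2015-02-01", "AFRICA", "new comment"),
        ],
    )
    return conn


def query_region(conn):
    """Query the view exactly as if it were the original REGION table."""
    return conn.execute(
        "SELECT r_regionkey, r_name, r_comment FROM region ORDER BY r_regionkey"
    ).fetchall()
```

The view returns only the most recent satellite row per hub key, so a query written against the original table name can run unchanged against the virtual layer.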

-

How to easily load some Data Vault test data

Some time ago a customer asked me how to load some (test) data quickly and simply into their database XYZ (pick the one of your choice and replace XYZ) to test their newly developed Data Vault logistic processes.

The point was: they didn’t want to use all the ETL tool and IT process overhead just to run some small tests in their own environment. Is this well done from a data governance perspective? Well, that’s not part of this blog post. Just do this kind of thing only in your development environment.

-

Increased quality and sped up process

Dirk Lerner was engaged to coach my efforts of creating a data warehouse using data vault 2.0 architecture. It has been a pleasure to work with Dirk.

-

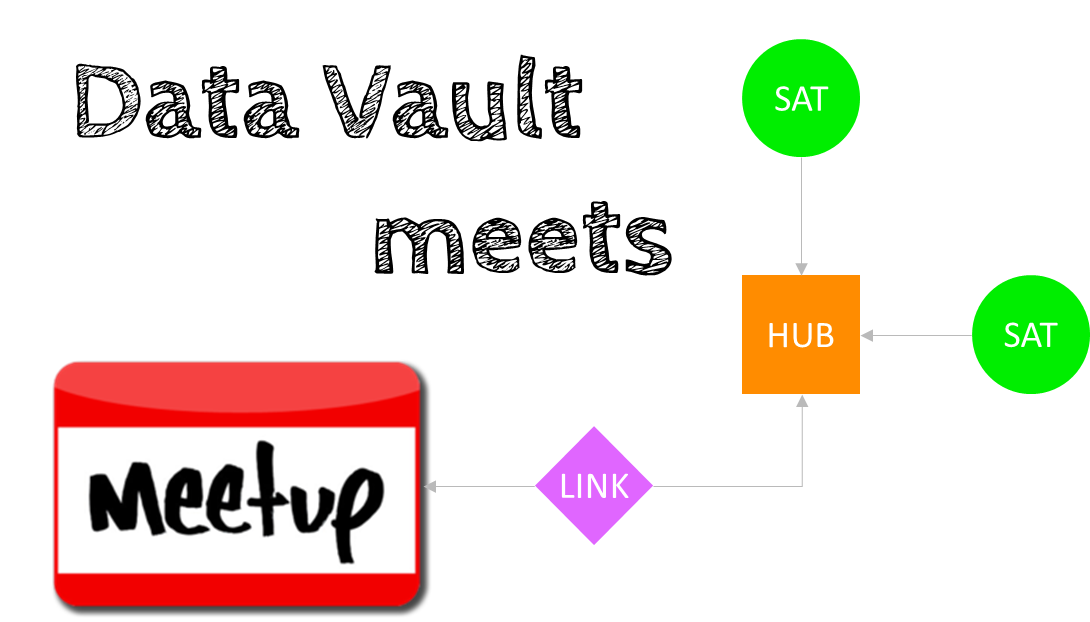

Meetup – Data Vault Interest Group

I reactivated my Meetup Data Vault Interest Group this week. A long time ago I was thinking about a regulars' table to network with other, let’s call them, Data Vaulters. It should be a relaxed get-together, with no business-driven presentations or, even worse, advertising for XYZ tool, consulting or any flavor of Data Vault. The feedback from many people was that they want something different from the existing Business Intelligence meetings. So, here it is!

-

Model driven decision making @ #BAS19

FastChangeCo and the Fast Change in a Hybrid Cloud Data Warehouse with elasticity

What is this 20-minute talk at #BAS19 about?

The fictitious company FastChangeCo has developed a way not only to manufacture smart devices, but also to extend them as wearables, in the form of bio-sensors, to clothing and living beings. Each of these devices generates a large amount of (sensitive) data, more precisely by recording, processing and evaluating personal and environmental data.

-

Modeling the Agile Data Warehouse with Data Vault

This book is a MUST for everyone interested in Data Vault, and also for everyone who is enthusiastic about Business Intelligence and (Enterprise) Data Warehousing.

From my point of view it is very well written: it is easy to understand, and all topics around Data Vault are explained very well.

-

The Data Doctrine

Message: Thank you for signing The Data Doctrine!

What a fantastic moment. I’ve just signed The Data Doctrine. What is the data doctrine? In a similar philosophy to the Agile Manifesto it offers us data geeks a data-centric culture:

Value Data Programmes¹ Preceding Software Projects

Value Stable Data Structures Preceding Stable Code

Value Shared Data Preceding Completed Software

Value Reusable Data Preceding Reusable Code

While reading The Data Doctrine I saw myself looking around and seeing all the lost options and possibilities in data warehouse projects, because companies, project teams, or even individuals ignore the value of data and incur the consequences. I saw it in data warehouse projects struggling with the lack of stable data structures in source systems as well as in the data warehouse. I saw it in fancy new systems where no one cares about which data was generated, or how. And even worse for a data warehouse project is the practice of keeping data locked away, with access limited to a few principalities of departmental castles.

All this is not the way to get value out of corporate data, and to leverage it for value creation.

As I advocate flexible, lean and easily extendable data warehouse principles and practices, I support the idea of The Data Doctrine to evolve the understanding of the need for data architecture as well as data-centric principles.

So long,

Dirk

¹ To emphasize the point, we (the authors of The Data Doctrine) use the British spelling of “programme” to reinforce the difference between a data programme, which is a set of structured activities, and a software program, which is a set of instructions that tell a computer what to do (Wikipedia, 2016).