FastChangeCo and the Fast Change in a Hybrid Cloud Data Warehouse with elasticity

What is this 20-minute talk at #BAS19 about?

The fictitious company FastChangeCo has developed a way not only to manufacture smart devices, but also to extend them as wearables, in the form of bio-sensors, to clothing and living beings. Each of these devices generates a large amount of (sensitive) data, or more precisely: the data arises from recording, processing and evaluating personal and environmental data.

Or how to successfully destroy every level of the data warehouse

Are you spoiled by success and tired of the eternal pat on the back? You don't want your first attempt at implementing a Data Vault project to be so successful that all your colleagues become jealous?

This blog post is a review of the Global Data Summit. But first I would like to say a few words about the Advanced Data Vault & Ensemble Modelling meeting, organized by Hans Hultgren and Remco Broekmann. The event brought together experienced data modelers from all over the world (New Zealand, South Africa, Europe and the USA) around Data Vault, Focal and Ensemble Logical Modeling (ELM). That sounds promising, doesn't it?

Advanced Data Vault & Ensemble Modelling

There were two interesting days with many topics around Data Vault and ELM put up for discussion. The idea behind the meeting is to think further, e.g. where to go with Data Vault and ELM and what the participants can contribute from their own experience. I personally find some of the points discussed very interesting and exciting, and I think they should be pursued further. Others I have to reflect on and continue researching. Most likely I will cover some of these topics in other blog posts, such as the discussion about concatenated keys, partitioned links or how you can do without satellites on links. We will see.

As already mentioned in my previous blog post, I will give a talk on the first day of the Data Modeling Zone 2017 about temporal data in the data warehouse.

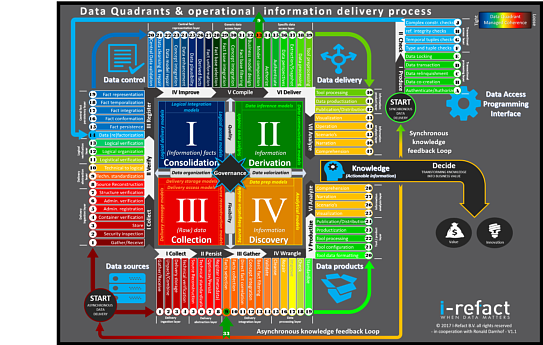

Another interesting talk will take place on the third day of the DMZ 2017: Martijn Evers will give a full-day session about Full Scale Data Architects.

Ahead of this session there will be a kickoff event sponsored by I-Refact, data42morrow and TEDAMOH: at 6 pm on Tuesday, 24 October, after the second day of the Data Modeling Zone 2017, everyone interested can meet up and join the launch of the German chapter of Full Scale Data Architects.

I am very happy to be a speaker at this year's Data Modeling Zone in Düsseldorf. Again, as at the Global Data Summit, I'm talking about one of my favorite topics: temporal data in the data warehouse, especially in connection with Data Vault and dimensional modeling.

I am very pleased to be speaking at the Global Data Summit in Golden, Colorado this year. I am talking about one of my favorite topics: temporal data in the data warehouse, especially in connection with Data Vault and dimensional modeling. The title is:

Bitemporal modeling for the Agile Data Warehouse

The talk is a 5x5 presentation, that is, 5 slides in 5 minutes. Afterwards, participants have the opportunity to discuss the topic intensively with me in a 90-minute whiteboard session.

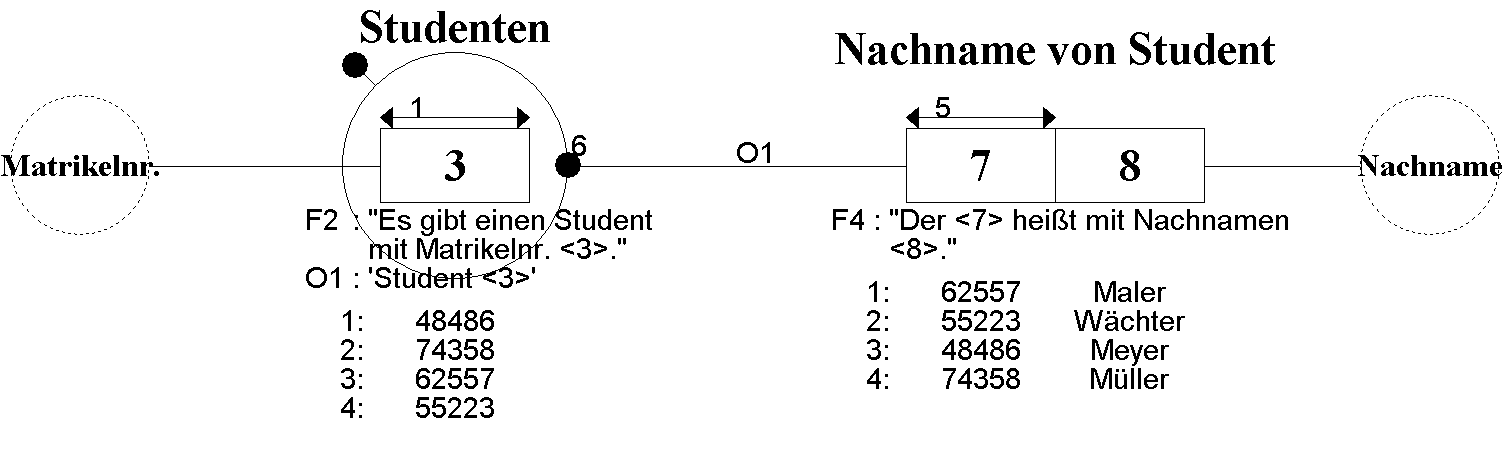

Months ago I talked to Stephan Volkmann, the student I mentor, about possible topics for a seminar paper. One suggestion was to write about Information Modeling, namely FCO-IM, ORM2 and NIAM, siblings of the Fact-Oriented Modeling (FOM) family. In my opinion, FOM is the most powerful technique for building conceptual information models, as I wrote in a previous blog post, Sketch Notes Reflections at TDWI Roundtable with FCO-IM.
