Data Architecture

-

A comment

In recent weeks I have read many pessimistic and negative articles and comments on social media about the state of data modeling in companies, both in Germany and worldwide.

Why? I don't know. I can't understand it.

I know many companies that invest a lot of time in data modeling because they have understood the added value. I also know many companies that initially rejected data modeling altogether, but came to understand its benefits through persuasion and training.

Isn't it the case that we (consultants, managers, project managers, subject-matter experts, etc.) should have a positive influence on data modeling, and should support our partners in projects in such a way that data modeling becomes a success? If we ourselves do not believe that data modeling is a success, then who will?

-

Bitemporal Data

If everything happened at the same time, there would be no need to store historical data. We, the consumers of data, would know everything at the same instant. Besides all the other philosophical implications: if time didn't exist, would data still be necessary?

(Un)fortunately, time exists, and data architects, data modelers and developers have to deal with it in the world of information technology.

In this category about temporal data I will collect all my blog posts about this fancy topic.

-

Blog

-

Data Architecture

-

Full Scale Data Architects at DMZ 2017

As already mentioned in my previous blog post, I will give a talk on the first day of the Data Modeling Zone 2017 about temporal data in the data warehouse.

Another interesting talk will take place on the third day of the DMZ 2017: Martijn Evers will give a full-day session about Full Scale Data Architects.

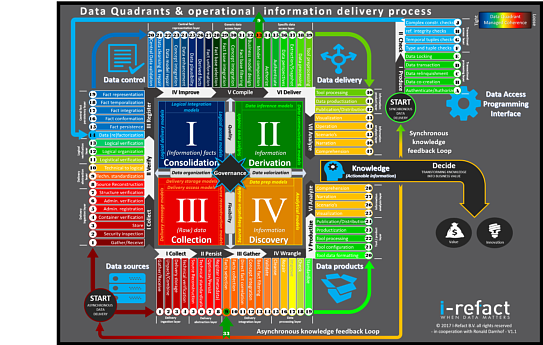

Ahead of this session there will be a kickoff event sponsored by I-Refact, data42morrow and TEDAMOH: at 6 pm on Tuesday, 24 October, after the second day of the Data Modeling Zone 2017, everyone interested can meet up and join the launch of the German chapter of Full Scale Data Architects.

-

Secret Spice

-

Temporal data series

Temporal data - A time travel

In no particular order, I publish my thoughts on and experiences with bitemporal or, more generally, temporal data. For me, this includes not only the properties and peculiarities of temporal data itself, but also the associated structures, loading processes and solutions of various kinds.

The business requirements that underlie all temporal data are important in any case and must not be neglected. From time to time in this series, I will show technologies that support temporal data, along with matching examples.

Like the already published series on PowerDesigner, this series is for me a kind of diary, a knowledge base and the 'Secret Spice', in which I record my discoveries and the tips and tricks around temporal data that I have documented for myself. I would like to share this with you.

And, as always, you'll meet team members from the Data Management Center of Excellence (DMCE) at my favorite client: FastChangeCo™!

-

Temporal data series

Temporal data - A journey through time

At irregular intervals, I publish my thoughts on and experiences with bitemporal or, more generally, temporal data. For me, this includes not only the properties and peculiarities of temporal data itself, but also the associated structures, loading processes and solution approaches of all kinds.

The business requirements that underlie all temporal data are important in any case and must not be neglected. Now and then in this series, I show technologies that support temporal data, along with matching examples.

Like the already published series on PowerDesigner, this series is for me a kind of diary, a knowledge base and the 'Secret Spice', in which I record my discoveries and the tips and tricks around temporal data that I have documented for myself. I would like to share them with you.

And, as always, you will meet team members of the Data Management Center of Excellence (DMCE) at my favorite client: FastChangeCo™!

-

The Data Doctrine

Message: Thank you for signing The Data Doctrine!

What a fantastic moment. I’ve just signed The Data Doctrine. What is The Data Doctrine? In a philosophy similar to that of the Agile Manifesto, it offers us data geeks a data-centric culture:

Value Data Programmes¹ Preceding Software Projects

Value Stable Data Structures Preceding Stable Code

Value Shared Data Preceding Completed Software

Value Reusable Data Preceding Reusable Code

While reading The Data Doctrine, I found myself looking back at all the lost options and possibilities in data warehouse projects, lost because companies, project teams, or even individuals ignored the value of data and incurred the consequences. I saw it in data warehouse projects struggling with the lack of stable data structures in source systems as well as in the data warehouse. I saw it in fancy new systems where no one cared about which data was generated, or how. And even worse for a data warehouse project is the practice of keeping data locked away, with access limited to a few principalities of departmental castles.

All this is not the way to get value out of corporate data and to leverage it for value creation.

As I advocate flexible, lean and easily extendable data warehouse principles and practices, I’ll support the idea of The Data Doctrine to advance the understanding of the need for data architecture as well as for data-centric principles.

So long,

Dirk

¹ To emphasize the point, we (the authors of The Data Doctrine) use the British spelling of “programme” to reinforce the difference between a data programme, which is a set of structured activities and a software program, which is a set of instructions that tell a computer what to do (Wikipedia, 2016).

-

Webinar - Model driven decision making

An EXASOL webinar series

We are back after a long time with a new webinar. The last one that we (Mathias and I) did together was almost four years ago. Time flies! What's up this time?

The fictitious company FastChangeCo™ has developed a way not only to manufacture smart devices, but also to extend them as wearables, in the form of bio-sensors, to clothing and living beings. Each of these devices generates a large amount of (sensitive) data, or more precisely, does so by recording, processing and evaluating personal and environmental data.